An Idea Was Born

Building an application in just seven days might seem like an impossible task, but with a clear goal in mind, it's entirely achievable. This is the story of how I built WhisperWizard, a macOS application designed to speed up your writing with smart voice-to-text, all within a week.

WhisperWizard was born out of two primary motivations. First, I had a feature in mind for another application I had built (PaletteBrain), but I didn't want to risk bloating that app or disrupting its user experience. Second, I wanted to experience, once again, the thrill of shipping a product.

The Concept

The concept of WhisperWizard was simple yet powerful. It would allow users to record their voice and transcribe their spoken words into text using OpenAI's Whisper.

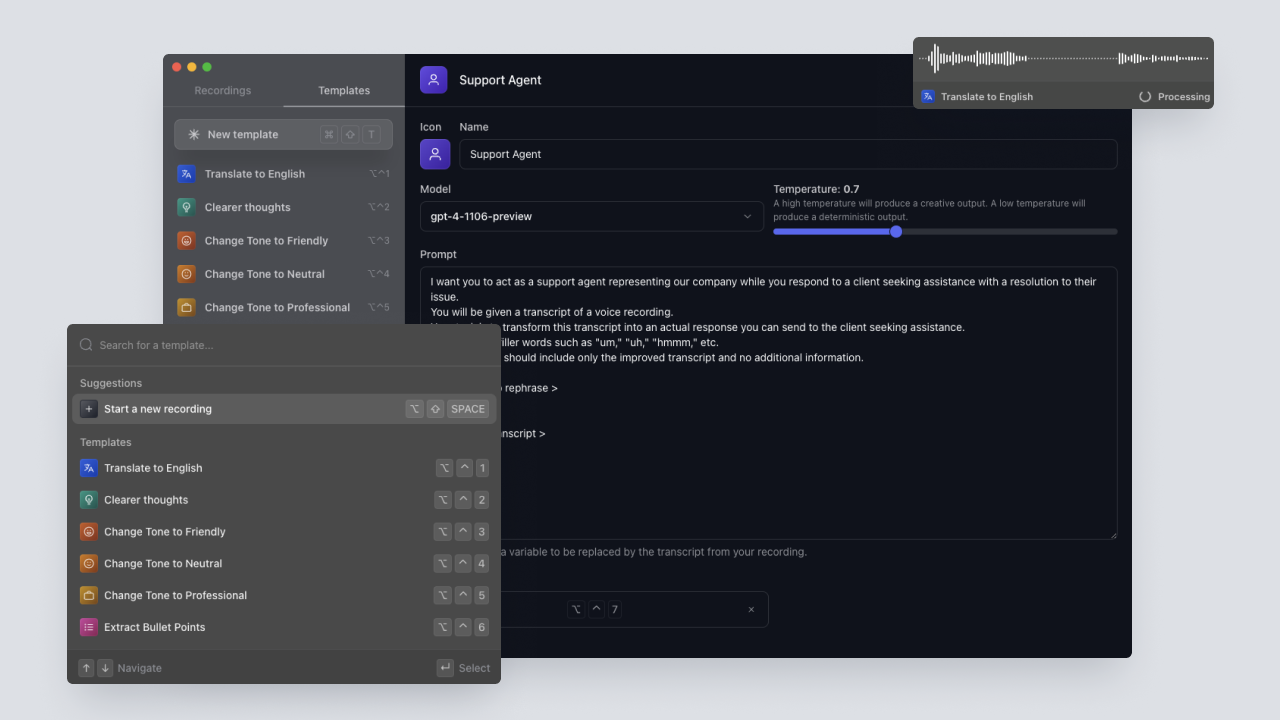

Users could also create their own prompt templates and assign shortcuts to these templates.

Once the transcription was complete, it would be processed through a custom ChatGPT prompt based on the user's chosen template.

The response from OpenAI would then pop right back into the original app where the shortcut was used.

Designing the User Experience

The journey began with sketching the overall design in Figma. The aim was to ensure that the application had a user-friendly interface and a great user experience.

The recording window of the application was designed to be simple, consisting of a recording waveform at the top and the current template chosen by the user below it. This design provided users with visual feedback that their voice was being recorded and that the application was functioning as expected.

Implementing Core Features

Once the design was finalized, I quickly coded the recording window and implemented the functionality to start and stop the recording. The next step was to incorporate Whisper from OpenAI, which would convert the user's recorded audio file into a transcript.

Following this, I implemented a global shortcut to start and stop the recording, ensuring that this shortcut was customizable by the user in the settings page.

The next major feature was the ability to insert the resulting text into the original application from which WhisperWizard was triggered.

Customizable Templates

The next challenge was to allow users to create their own customizable templates for various tasks. For example, a user could create a template to translate their recording into a foreign language or to convert their recordings into a well-written email. I also made it possible for users to assign a custom shortcut to trigger a recording with a specific template.

Once template management was in place, I could combine the transcript from Whisper with the prompt from the user's chosen template and send both to ChatGPT. This transformed the recorded speech into well-written text for the given task.
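To make the template step concrete, here is a minimal sketch of how a template and a transcript could be combined into a chat-style request. This is not WhisperWizard's actual code: the `Template` structure, its field names, and the `build_messages` helper are all hypothetical, and the system/user split is just one reasonable way to arrange the prompt.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Template:
    """A user-defined prompt template (hypothetical structure)."""
    name: str
    prompt: str                      # instruction written by the user
    shortcut: Optional[str] = None   # e.g. "cmd+shift+e"; the format is an assumption


def build_messages(template: Template, transcript: str) -> List[Dict[str, str]]:
    """Combine the template prompt and the transcript into chat-style messages."""
    return [
        # The template acts as the instruction for the model.
        {"role": "system", "content": template.prompt.strip()},
        # The transcribed speech is the text to transform.
        {"role": "user", "content": transcript.strip()},
    ]


email = Template(name="Email", prompt="Rewrite the dictation as a polite email.")
messages = build_messages(email, "  tell sam the demo moves to friday ")
```

Keeping the template as the instruction and the transcript as the input means the same recording can be reprocessed with a different template without transcribing it again.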

Fine-Tuning and User Settings

I then created a settings page where users could update their OpenAI API key and modify the default WhisperWizard shortcuts.

Following this, I added a feature to display previous recordings, allowing users to replay their old recordings and access both the original and processed transcripts.
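A history like this needs each entry to keep the audio file alongside both versions of the text. The sketch below shows one possible shape for that data; the `Recording` and `History` names, their fields, and the sample file names and dates are assumptions made for illustration, not the app's real storage model.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class Recording:
    """One past recording (hypothetical fields)."""
    audio_path: str                 # where the audio file lives, for replay
    transcript: str                 # original transcript
    processed_text: Optional[str]   # output after the template; None if no template was used
    template_name: Optional[str]
    created_at: datetime


@dataclass
class History:
    entries: List[Recording] = field(default_factory=list)

    def add(self, recording: Recording) -> None:
        self.entries.append(recording)

    def newest_first(self) -> List[Recording]:
        """Return entries sorted so the most recent recording comes first."""
        return sorted(self.entries, key=lambda r: r.created_at, reverse=True)


history = History()
history.add(Recording("rec-1.m4a", "hello world", None, None, datetime(2023, 1, 1, 9, 0)))
history.add(Recording("rec-2.m4a", "tell sam hi", "Hi Sam,", "Email", datetime(2023, 1, 2, 9, 0)))
latest = history.newest_first()[0]
```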

The final step was to create a window that would pop up just before initiating a recording. This feature would give users the flexibility to choose the template they wanted to apply to the upcoming recording. If the user chose not to select a template, they would simply receive the transcript.
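The "no template means plain transcript" rule is easy to express as a single branch. The following is a sketch under assumptions: `finalize_text` is a hypothetical helper, and `run_prompt` stands in for whatever function actually calls the language model, so a fake is used here to keep the example self-contained.

```python
from typing import Callable, Optional


def finalize_text(
    transcript: str,
    template_prompt: Optional[str],
    run_prompt: Callable[[str, str], str],
) -> str:
    """Return the raw transcript, or the model output when a template was chosen."""
    if template_prompt is None:
        # No template selected: skip the model call entirely.
        return transcript
    return run_prompt(template_prompt, transcript)


def fake_model(prompt: str, transcript: str) -> str:
    """Stand-in for a real model call; it only tags the transcript with the prompt."""
    return f"[{prompt}] {transcript}"


raw = finalize_text("hello there", None, fake_model)
processed = finalize_text("hello there", "Translate to French", fake_model)
```

Passing the model call in as a parameter keeps the branching logic testable without a network connection or an API key.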

The Final Stretch

Once all the features were successfully integrated, it was time to shift focus towards polishing the application. This involved a lot of testing to identify and fix bugs. This stage was crucial to ensure the application was ready to be released to the public!

And so, just like that, in just seven days, WhisperWizard went from an idea to a fully functional application, ready for launch.